Google AI, Bad LLM Math, and More // BrXnd Dispatch vol. 005.5

End-of-week links roundup at the intersection of brands and AI

Hi everyone. Welcome to the BrXnd Dispatch: your bi-weekly dose of ideas at the intersection of brands and AI. I’m trying to settle into a routine with this, so my thought is one email with ideas and another with links. This is the links roundup. I hope you enjoy it and, as always, send good stuff my way.

[Google Blog] Google is about to make lots of AI announcements. The whole conversation that Google was getting left behind by ChatGPT was always insane to me. So much of the tech that everyone uses was developed by Google researchers, and they’ve had AI in search for a long time. The more interesting question to me is why they didn’t want to be the first ones to release this stuff.

[Gizmodo] CNET Is Reviewing the Accuracy of All Its AI-Written Articles After Multiple Major Corrections. Not much to say about this one. It’s hard to get content straight out of these large language models that doesn’t include some inaccuracies. They’re particularly bad at math: it seems like they should know what they’re saying, but they’re making predictions, not calculations.

[Scale Blog] On that note, some folks at Scale AI have a good writeup on Claude, a new AI chatbot from Anthropic. This bit comparing Claude and ChatGPT on mathematical reasoning was particularly good:

To show mathematical thinking skills, we use problem 29 of the Exam P Sample Questions published by the Society of Actuaries, typically taken by late-undergraduate college students. We chose this problem specifically because its solution does not require a calculator.

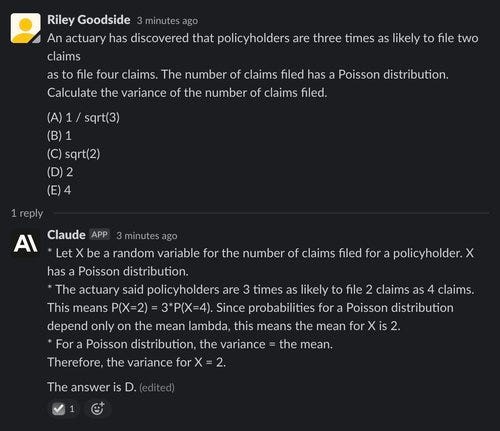

ChatGPT struggles here, reaching the correct answer only once out of 10 trials — worse than chance guessing. Below is an example of it failing — the correct answer is (D) 2:

Claude also performs poorly, answering correctly for only one out of five attempts, and even in its correct answer, it does not lay out its reasoning for inferring the mean value of X:

We will use AI like the conductor of a symphony — we are in control, creatively guiding to a final composition. Great conductors will innovate and create marvelous things. Great conductors will be the architects, the designers, and the directors of mastering all of the aspects of working with artificial intelligent creative tools.

[a16z.com] Interesting writeup from Andreessen Horowitz on what the generative AI market will look like. This bit particularly stood out:

There don’t appear, today, to be any systemic moats in generative AI. As a first-order approximation, applications lack strong product differentiation because they use similar models; models face unclear long-term differentiation because they are trained on similar datasets with similar architectures; cloud providers lack deep technical differentiation because they run the same GPUs; and even the hardware companies manufacture their chips at the same fabs.

[The Verge] Let the lawsuits begin: Getty Images is suing the creators of AI art tool Stable Diffusion for scraping its content. I have no clue where this stuff is going to end up, but this clearly isn’t a good look:

[MIT Press] This book on art history and machine learning sounds fascinating:

Though formalism is an essential tool for art historians, much recent art history has focused on the social and political aspects of art. But now art historians are adopting machine learning methods to develop new ways to analyze the purely visual in datasets of art images. Amanda Wasielewski uses the term “computational formalism” to describe this use of machine learning and computer vision technique in art historical research. At the same time that art historians are analyzing art images in new ways, computer scientists are using art images for experiments in machine learning and computer vision. Their research, says Wasielewski, would be greatly enriched by the inclusion of humanistic issues.

[Dale on AI] Want to go deeper? This write-up of transformers from Dale Markowitz is excellent. An excerpt:

A Transformer is a type of neural network architecture. To recap, neural nets are a very effective type of model for analyzing complex data types like images, videos, audio, and text. But there are different types of neural networks optimized for different types of data. For example, for analyzing images, we’ll typically use convolutional neural networks or “CNNs.” Vaguely, they mimic the way the human brain processes visual information.

That’s it for this week. Thanks for reading, and please send over any links you think are worth reading. Also, while I have you, I’m actively looking for sponsors for my BrXndCon event in mid-May in NYC. If you’re interested, please reach out.

— Noah