Brand Experiments on Midjourney // BrXnd Dispatch vol. 010.5

What does Midjourney *think* of various brands? Plus lots of links.

Hi everyone. Welcome to the BrXnd Dispatch: your bi-weekly dose of ideas at the intersection of brands and AI. Friday is usually reserved for links, but today we have something special for you. Ed Cotton is an old friend from the heady days of mid-2000s marketing blogs. He’s a serious thinker on how brands work, and after he posted a bunch of fascinating Midjourney brand experiments, I asked if he would be up for writing them up for the newsletter. Ed is a brand consultant and former Chief Strategy Officer of BSSP. You can find him on LinkedIn. - Noah

Most people use AI art generators like Midjourney to create a single impressive-looking image—and a whole micro-industry has grown up to help people refine their prompt craft.

When I saw these results, however, I wanted to know something different. I was more interested in understanding how the AI “sees” brands. What visual information does it have on brands, and how does that visual information surface?

We know that Midjourney has been trained on hundreds of millions of images, and it’s been tweaked to produce a certain type of image suited to sci-fi, fantasy, and gaming. So while it’s not fully representative of a total universe of images, it’s very interesting to see “what it sees.”

I have run various experiments, and one of the most intriguing has been brand movie poster generation, which seems to be a good match with Midjourney’s focus on dramatic art.

Here Midjourney knows the tropes of movie posters, and it searches for characters and elements to populate them with.

It’s an excellent way to find what the AI believes are the most prominent elements of the brand.

Do these with enough brands, and the results tell a powerful story about marketing and brand-building.

Key differences between brands

Perrier and Evian

An art deco history vs. a youthful elixir.

FedEx and UPS

The people (almost like old-school police) vs. the giant logistics machines.

Bank of America and Citibank

The thrusting and dynamic bankers vs. a faceless and intimidating concrete institution.

Google and Facebook

The youthful and spirited adventure of Google vs. the horror of the mental distress of Facebook.

Brands trapped in iconic pasts

Rolls-Royce and Dos Equis

Dos Equis cannot escape the iconic “Most Interesting Man in the World,” and Rolls-Royce appears stuck in a long-lost aristocratic past.

Misc Surprises

Samsung and Hyundai

Korean brands don’t share their provenance with us in their US communication, but it clearly shows up on Midjourney.

AT&T and Comcast

Both are seemingly trapped in a very strange retro-tech past.

While neither science nor truly pure art, these experiments on Midjourney are a way to learn and see what’s inside the AI and perhaps to help illuminate some hypotheses about brands and categories.

They are also a way to test our thinking and to find some of the dark and mysterious corners of the AI that no consumer or client is going to want to talk about.

I believe it could be handy for consumer research: respondents can use it to bring their thoughts and feelings to life in exciting ways that the crappy scrap-art mood board exercises in focus groups never really deliver.

I will undoubtedly be using Midjourney in future qualitative research projects.

A big thank you to LinkedIn, Redscout, and Otherward for sponsoring the upcoming BrXnd conference and my work. If you would like to support us, I have various sponsor levels available for the event. Be in touch (or just reply to this newsletter), and I’m happy to send over the details.

And now some links …

Some links and thoughts from the intersection of brands and AI. If you have good links for me, please send them my way by replying to this email, leaving a comment, or sending an email.

[GitHub] When people talk about AI stuff, sometimes I feel like they’re speaking a different language. I don’t mean the technical folks, although I certainly have large gaps there. It’s more that when people talk about use cases, ChatGPT prompts, and the like, I feel like they’re using a different set of tools than I am.

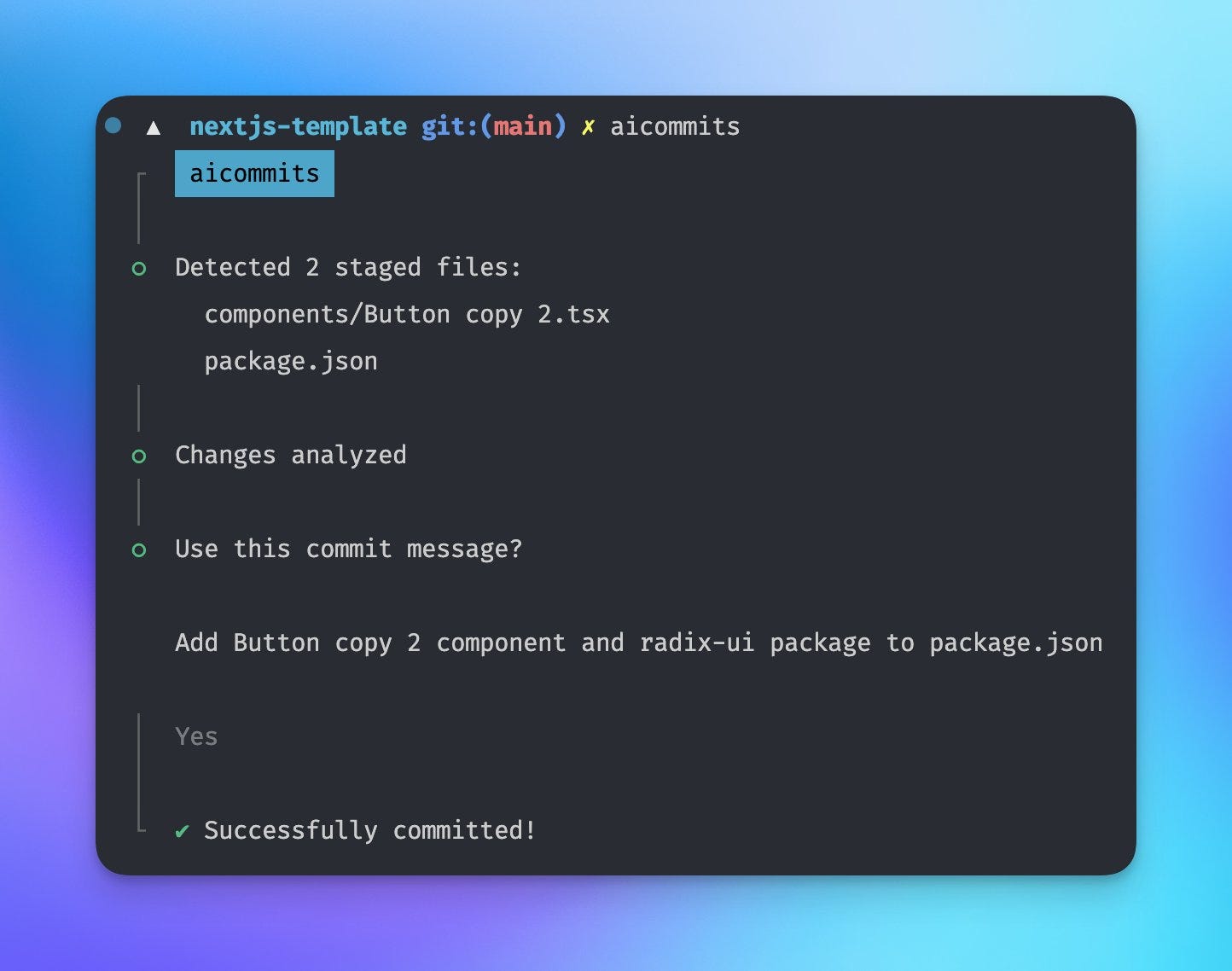

In my experience, having spent quite a bit of time looking at the output of GPT-3, the best use cases for large language models right now aren’t writing articles or blog posts, but doing much simpler stuff like scraping. Part of that is the much-discussed hallucinations these models are prone to (the thing where they just make stuff up out of thin air). Extracting data from scraped pages works particularly well because the model has less chance to make stuff up when you give it rigid input to work with. To that end, one of the most exciting new AI implementations I’ve run into recently is this AI commit writing package from Hassan El Mghari, a senior developer advocate at Vercel (which I also love, FWIW).

What does it do? Every time you write code, you need to commit the updates to your code repository. When you do that, you must include a message explaining what changed. This is one of those little chores that feels overwhelming sometimes. After spending a bunch of time updating something, the last thing you want to do is write an intelligent commit message that explains all the changes. And so many commit messages end up with something like “code updates.” This is definitely not a best practice, but it’s a reality sometimes. This package looks at the code “diff” (the changes between the new version and the previous one) and comes up with a smart message that explains what changed.

That’s it. A very narrow use case, specific data, and a human in the loop to confirm the message (that last piece isn’t necessary most of the time, but the tool still misses around one time in ten). I strongly suspect these sorts of little AI tools will make a much larger short-term impact than the larger ones everyone is focused on.
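For the curious, here is a minimal TypeScript sketch of the flow described above. It is not the package's actual code: the function names, the prompt wording, and the 4,000-character cap are all assumptions made for illustration, and the call to the language model itself is left out.

```typescript
// Hypothetical sketch of a diff-to-commit-message flow.
// None of these names come from the real package.

// Assumed budget so the prompt stays small; the real limit depends on the model.
const MAX_DIFF_CHARS = 4000;

// Wrap the raw diff in a narrow instruction so the model has rigid input
// to work from and less room to invent changes.
function buildCommitPrompt(diff: string, maxChars: number = MAX_DIFF_CHARS): string {
  const trimmed =
    diff.length > maxChars ? diff.slice(0, maxChars) + "\n[diff truncated]" : diff;
  return [
    "Write a one-line commit message describing the following changes.",
    "Only describe what is in the diff.",
    "",
    trimmed,
  ].join("\n");
}

// Human-in-the-loop step: the suggestion is only used if a person accepts it.
// Otherwise the caller's own message is kept.
function confirmMessage(suggestion: string, accepted: boolean, fallback: string): string {
  return accepted ? suggestion.trim() : fallback;
}
```

The confirmation step is the part that covers the one-in-ten misses: a bad suggestion costs a keystroke rather than a misleading commit history.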

[Ad Age] Nice writeup of AI brand collabs in Ad Age with some quotes from me.

The popularity of fake brand collabs has led to another issue, Brier said. Now, when collabs launch, people can’t tell if they’re for real or AI. “Whenever a brand does a real collab,” Brier said, “people now send it to me because they think it’s my site.”

If you’re one of these people, I appreciate you!

[A16Z] Andreessen Horowitz invested in Replicate, which runs various open-source models and provides an API. Here’s Replicate in BrXndscape, my marketing AI landscape.

[MatthewButterick.com] Camera Obscura: The Case Against AI In Classrooms is worth a read. I’m pretty against most of the knee-jerk reactions to the use of these models in classrooms, but this is a worthwhile point:

Broadly speaking, what we’re teaching students with writing assignments is how to make a persuasive argument. And the raw material of persuasive arguments is credible evidence. What makes evidence credible? Often, the source of the evidence. Thus, learning how to gather sources and weigh their credibility is a hugely important writing skill for every student, up there with learning how to avoid plagiarism.

Much of this comes back to the need for better media literacy. One interesting question I’ve been discussing with a few friends is whether the type of media literacy needed for AI differs fundamentally from the one needed earlier.

[Matt Rickard & LangChain] Why Python Won’t Be the Language of LLMs came out the same week LangChain, a toolset for working with large language models, was released as a TypeScript package. I had played with LangChain before, but I do most of my work in TypeScript, so this release has been a huge help. There are a bunch of basic functions in LangChain, like splitting text by the number of tokens, that I had written myself and am now happy to replace with some out-of-the-box functionality.
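To give a sense of what "splitting text by the number of tokens" means, here is a rough TypeScript sketch of the hand-rolled kind of helper a library can replace. It is not LangChain's API: `splitByTokens` is a made-up name, and it treats each whitespace-separated word as one token, which is only a stand-in for a real model tokenizer.

```typescript
// Hypothetical helper, not part of LangChain.
// Assumption: one whitespace-separated word counts as one token.
// Real tokenizers split text differently, so counts will not match a model's.
function splitByTokens(text: string, maxTokens: number): string[] {
  if (maxTokens < 1) {
    throw new Error("maxTokens must be at least 1");
  }
  // Break the text into words and drop empty strings from leading or trailing whitespace.
  const tokens = text.split(/\s+/).filter((t) => t.length > 0);
  const chunks: string[] = [];
  // Walk the token list in steps of maxTokens, joining each slice back into text.
  for (let i = 0; i < tokens.length; i += maxTokens) {
    chunks.push(tokens.slice(i, i + maxTokens).join(" "));
  }
  return chunks;
}

// Example: five words in chunks of at most two.
const exampleChunks = splitByTokens("one two three four five", 2);
console.log(exampleChunks);
```

Each chunk then fits within whatever token budget you set, which matters because language models only accept a limited amount of text per request.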

[Runway ML] Gen-1, a new video model from Runway, looks really impressive.

Thanks for reading. If you want to continue the conversation, feel free to reply, comment, or join us on Discord. Also, please share this email with others you think would find it interesting.

— Noah