AI Brand Distillations // BrXnd Dispatch vol. 006.5

On brand aesthetics and understanding, plus lots of good links.

Hi everyone. Welcome to the BrXnd Dispatch: your bi-weekly dose of ideas at the intersection of brands and AI. My plan was to keep the .5 end-of-week edition to just links, but there’s too much good stuff to talk about. So for today’s Dispatch, we’re joined by Anita Schillhorn van Veen, who, amongst many other things, is the Director of Strategy at McKinney in Los Angeles. Anita also writes the excellent Framing newsletter. What follows is an essay she originally wrote for her newsletter and was kind enough to let me run here. Thanks, Anita! And thanks to all of you for reading. Please share this newsletter with friends and colleagues who might find it interesting.

Brand collabs have become a staple of marketing; they’ve become so ubiquitous that even Skechers has a page dedicated to their collabs.

I’ve been playing around with brxnd.ai, a brand collaboration tool that uses generative AI to write content and create imagery. Yielding creative decisions to DALL·E 2 and GPT-3 provides interesting starting points for what a brand collaboration can be; it also generates fascinating observations about the brands themselves. Noah documented some of his initial observations here.

On the visual side, the AI distills each brand to its most recognizable form, thereby separating brands with strong visual identities from those with weaker ones. It creates strange concoctions.

First, let’s get visual.

Some evoke what could be possible, especially for brands with powerful visual identities. For example, here’s the Doritos logo:

And here are three Doritos brxnd.ai examples, in which the toxic orange color and the triangle shape (both of the chip and the brand) are prominent.

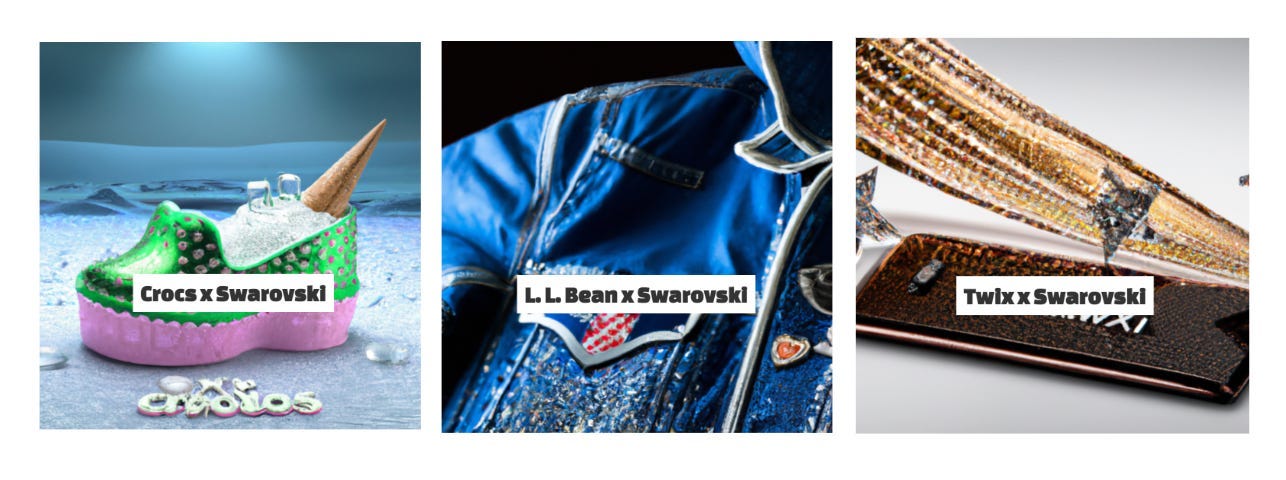

AI finds a different brand element that’s central to Swarovski. There’s little sign of the logo, but bling is the thing.

Hello Kitty is such a powerful visual that she overwhelms a few brands, even those with iconic colors or characters like Little Caesars and Burger King.

However, in other collabs, the adorable Japanese kitten gets abstracted into just her ribbon and/or bits of her pink and red colorways.

Some AI collabs are remarkably less awful than what made it into actual reality.

Jimmy Choo x Timberland Collab: IRL vs. AI

And many are curiosities, distant cousins to the brand elements you might expect, or even complete head-scratchers.

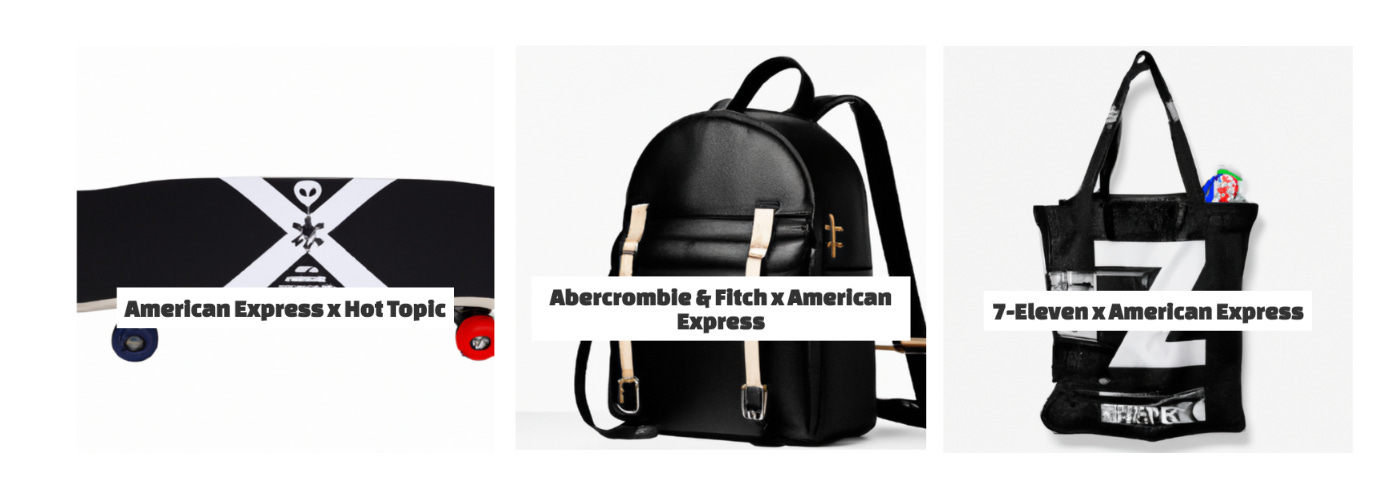

Although the logo and other brand elements don’t readily appear, American Express collabs seem to capture some of the brand’s sophistication by leaning toward black.

The Write-Ups

So, what is not being captured by the visual component of the AI? The ethos of the brand—something that sits beyond the recognizable. Here’s where the text comes in. It often captures the frothy descriptions of each brand, and the purpose of their coming together, in an upbeat, salesy tone [editor’s note: the marketing copy is one part of the system that I used a fine-tuned model for, training it on hundreds of actual collab announcements. - Noah]. Here’s an absurd descriptor of a Swarovski x Instagram candy bar—blingy, feed-worthy, delicious:

Looking for the perfect Instagram-worthy snack? Look no further than the new Swarovski candy bar! This limited edition treat is made with real Swarovski crystals and is sure to turn heads when you post a pic of it. With a delicious chocolate taste and beautiful crystal design, this candy bar is a must-have for any Instagrammer. So don't wait – grab a Swarovski candy bar today!

And here’s one from Rolex x Grateful Dead. It notes conversion vans and jam bands, as well as Rolex’s classic design, quality, and accuracy:

The Rolex and Grateful Dead watch collaboration is the perfect accessory for any fan of the iconic jam band. The watch's classic design and high-quality materials are perfectly suited to any style, whether you're hitting the road in your conversion van or hitting the town with your buds. With accurate timekeeping and a durable construction, this watch will keep you on beat all day long.

These collabs are AI pulling together what’s most iconic or known about both brands into one product write-up. But the cracks in what the AI engines produce are where you can find what’s missing. So what makes these AI collabs feel not quite there?

Many IRL collabs are of the same ilk: fast ways to combine the powers and audiences of two brands with little true consideration of what it will mean for the brand. For example, here’s a write-up from an all-too-real Puma x Frosted Flakes collab.

To honor 70 years of Tony the Tiger, we teamed up with Kellogg’s on PUMA x FROSTED FLAKES. This sweet collaboration celebrates the iconic cereal and its beloved mascot with fun twists on Classic styles, including the Suede and Roma. Bright orange, vertical black stripes, and vintage graphics ensure the collection pieces look GR-R-REAT!

Brands like Liquid Death have found ways to poke fun at our obsession with collaborations. Here’s their collab with Tony Hawk, the world-famous skateboarder, who donated bits of his blood for every skateboard.

Liquid Death’s collab with Tony Hawk—his literal blood in every board

What brxnd.ai gives marketers, besides simply imagining collab possibilities, is a way to read the power of a brand and what it stands for.

Noah’s response: I am so happy to see this experiment spark thoughts and discussion like this. What was striking to me as I was building this out originally was how the AI clearly understood and misunderstood brands in interesting ways. Next week I’ll write about some updated experiments in this realm as I try to continue to pull apart precisely what these systems have intuited about brands from all the content they’ve consumed.

And now some links …

Some links and thoughts from the intersection of brands and AI. If you have good links for me, please send them my way by replying to this email, leaving a comment, or sending an email.

[ZDNet] Yann LeCun, Meta’s Chief AI Scientist, had some interesting stuff to say about AI this week. First, he doesn’t find what OpenAI is doing all that innovative. "It's nothing revolutionary," he explained on a press call last week, "although that's the way it's perceived in the public … It's just that, you know, it's well put together, it's nicely done."

Although that quote got most of the attention, this was much more interesting to me:

"There's something like 12 million shops that advertise on Facebook, and most of them are mom and pop shops, and they just don't have the resources to design a new, nicely designed ad," observed LeCun. "So for them, generative art could help a lot."

Facebook helping its vast number of customers generate better ads with AI makes lots of sense.

[Futurism] Google seems ok with AI-generated content? From Futurism:

"Our ranking team focuses on the usefulness of content, rather than how the content is produced," the company's Public Search Liaison Danny Sullivan said at the time. "This allows us to create solutions that aim to reduce all types of unhelpful content in Search, whether it’s produced by humans or through automated processes."

[Investing in AI] Good post from Rob May on The Forces Driving The Economics Of Generative Media. This one, in particular, has been on my mind lately:

Limitations on further training data - I’ve heard that GPT-4 was trained on so much public text data that OpenAI isn’t sure where to go next to get an order of magnitude more. Whether that is true or not, it definitely will become an issue at some point. Will it matter for the economics of generative AI? Maybe the OpenAI-Microsoft partnership will help OpenAI get access to more private data (corporate Word docs?) that help continually push the training data set to a larger scale. But at some point it starts getting hard to find more data. Maybe the models will be so good that we don’t care and pushing them forward isn’t an imperative. Maybe we have humans label certain data types at mass scale to help the models, in which case the time to do so could be the bottleneck to the next level of breakthrough.

[Garbage Day] Good issue of Garbage Day on AI. This sounds about right, except I suspect it will be less than a year instead of the three it took to get to the iPhone:

The way I see it, in terms of where we are in the evolution of A.I., is that we’re basically in that awkward middle ground between the launch of Facebook in 2004 and the first iPhone in 2007. There are a lot of people excited about this stuff and there is a similar amount of people who are terrified of what it could do to us. And a whole bunch more who have never used any of these tools and have no idea where to begin, but once it’s easy enough, won’t even think twice. Because it’ll be fun or good for business or, probably more likely, because it’ll eventually come by default in our devices and popular services.

[Anthropic] Wondering what the market salary is for a prompt engineer? Anthropic is hiring one for $250-335k.

[Twitter] The first NeRF (neural radiance field) in a TV commercial?

[Entropy] The former head of product for OpenAI on testing models by “vibes”:

From a benchmarking perspective, there are a number of interesting things here. It’s rather wild to consider that state of the art is “testing by vibes”, yet this is true. You sort of poke around to get a ~feeling~ and once you’ve spelunked enough you yolo into production.

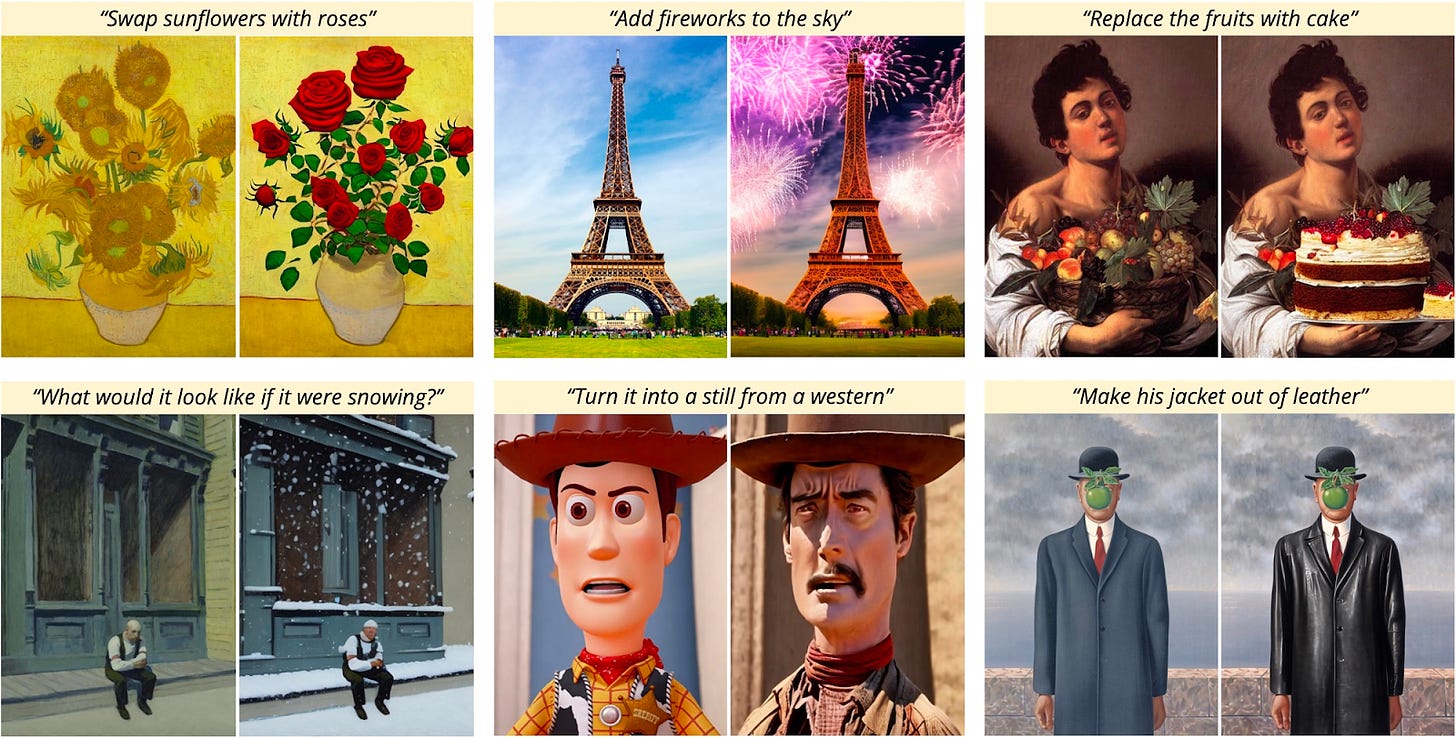

[TimothyBrooks.com] Instructpix2pix is a new image generation model that allows you to upload an image and adjust it using a prompt.

You can try the model on Hugging Face or at PlaygroundAI, which already seems to have implemented it (or something like it).

[PR Newswire] Shutterstock announced an AI image generator. While the announcement is a bit short on details, this bit seems intriguing:

CONFIDENCE: We're the first to support a responsible AI-generation model that pays artists for their contributions, making us your trusted partner for generating and licensing the visuals you need to uplevel your brand. Also, we have thoughtfully built in mitigations against the biases that may be inherent in some of our datasets, and we are continuing to explore ways to fairly depict underrepresented groups.

[FPO Kenny] Creative Director Kenny Friedman, who I had the pleasure of catching up with this week, is working on some fun AI-generated characters inspired by Humans of New York.

[Twitter] This thread of AI glitches is … creepy? This is one of my favorites, and it’s hard to look away.

That’s it for this week. Thanks for reading, and please send over any links you think are worth reading. Also, while I have you, I’m actively looking for sponsors for my BrXndCon event in mid-May in NYC. If you’re interested, please reach out.

— Noah